Consistent Hashing Explained

Hey there! Today I want to dive into one of the most elegant solutions in distributed systems - consistent hashing. If you've ever wondered how Netflix, Amazon, or any large-scale system manages to distribute data across thousands of servers without everything breaking when a server goes down, you're in for a treat!

When I first encountered consistent hashing, it felt like magic. But once you understand the core concepts, you'll see it's actually a beautifully simple solution to a very complex problem. Let's break it down step by step.

The Problem: Why Traditional Hashing Breaks

Imagine you're running a caching system with 4 servers. To decide which server should store a particular piece of data, you might use a simple hash function:

// Traditional hashing approach

function getServerIndex(key, numberOfServers) {

const hash = simpleHash(key);

return hash % numberOfServers;

}

// Example usage

const servers = ['Server-0', 'Server-1', 'Server-2', 'Server-3'];

const key = "user:123";

const serverIndex = getServerIndex(key, servers.length); // Let's say this returns 2

console.log(`Key ${key} goes to ${servers[serverIndex]}`); // "Key user:123 goes to Server-2"

This works perfectly... until it doesn't. Here's what happens when Server-2 crashes:

// Oh no! Server-2 is down, now we only have 3 servers

const availableServers = ['Server-0', 'Server-1', 'Server-3'];

// Same key, but now with 3 servers instead of 4

const newServerIndex = getServerIndex(key, availableServers.length); // Now returns 1!

console.log(`Key ${key} now goes to ${availableServers[newServerIndex]}`); // "Key user:123 now goes to Server-1"

The Rehashing Nightmare

See the problem? Our key "user:123" was on Server-2, but now the hash function says it should be on Server-1. When a client tries to fetch this data, they'll look in the wrong place and get a cache miss!

In fact, when the server count changes from 4 to 3, almost ALL keys get redistributed to different servers. This means:

- Massive cache misses (your cache hit rate drops to almost zero)

- Thundering herd problem as all requests hit your database

- Potential system meltdown

This is called the "rehashing problem," and it's a nightmare for any distributed system.

Enter Consistent Hashing

Consistent hashing is like a superhero that swoops in to save the day. According to Wikipedia:

"Consistent hashing is a special kind of hashing such that when a hash table is resized and consistent hashing is used, only k/n keys need to be remapped on average, where k is the number of keys, and n is the number of slots."

In simple terms: when you add or remove servers, only a small fraction of your data needs to move. The rest stays exactly where it is!

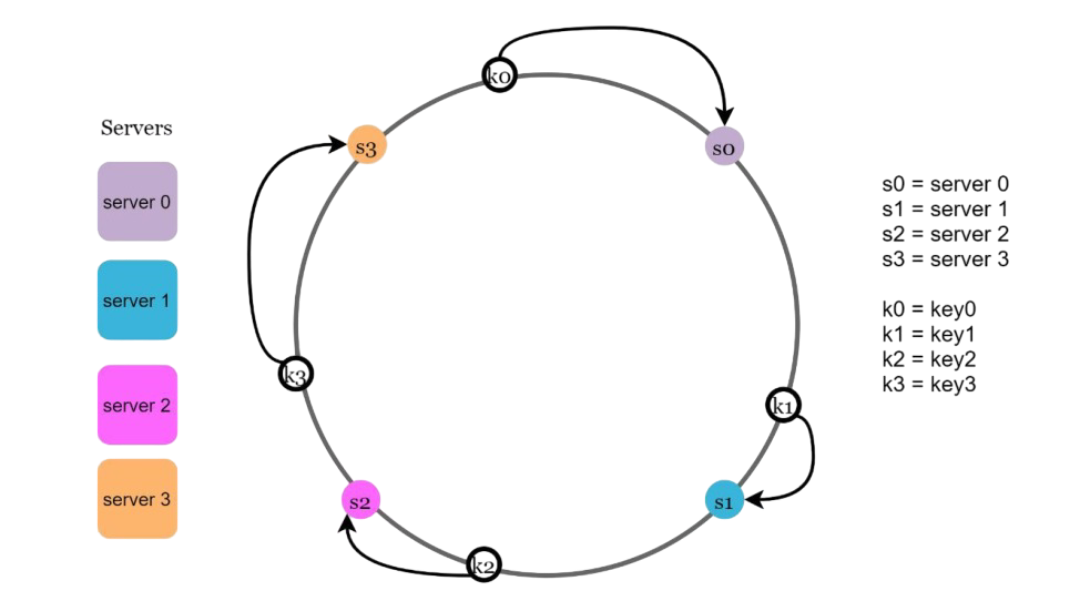

The Hash Ring Concept

Instead of thinking about servers as a simple array, consistent hashing uses a circular space called a "hash ring." Here's how it works:

- Imagine a circle (ring) with positions from 0 to 2^32 - 1

- Both servers and keys are mapped to positions on this ring using the same hash function

- To find which server stores a key, you move clockwise from the key's position until you find a server

Let me show you with code:

class ConsistentHash {

constructor() {

this.ring = new Map(); // position -> server

this.sortedPositions = []; // sorted list of server positions

}

// Simple hash function (in production, use something like SHA-1)

hash(key) {

let hash = 0;

for (let i = 0; i < key.length; i++) {

const char = key.charCodeAt(i);

hash = ((hash << 5) - hash) + char;

hash = hash & hash; // Convert to 32-bit integer

}

return Math.abs(hash);

}

addServer(server) {

const position = this.hash(server);

this.ring.set(position, server);

this.sortedPositions.push(position);

this.sortedPositions.sort((a, b) => a - b);

console.log(`Added ${server} at position ${position}`);

}

removeServer(server) {

const position = this.hash(server);

this.ring.delete(position);

this.sortedPositions = this.sortedPositions.filter(pos => pos !== position);

console.log(`Removed ${server} from position ${position}`);

}

getServer(key) {

if (this.sortedPositions.length === 0) {

throw new Error("No servers available");

}

const keyPosition = this.hash(key);

// Find the first server position >= key position (clockwise)

for (let position of this.sortedPositions) {

if (position >= keyPosition) {

return this.ring.get(position);

}

}

// If no server found clockwise, wrap around to the first server

return this.ring.get(this.sortedPositions[0]);

}

}

// Let's test it!

const hashRing = new ConsistentHash();

// Add servers

hashRing.addServer("Server-A");

hashRing.addServer("Server-B");

hashRing.addServer("Server-C");

hashRing.addServer("Server-D");

// Test key distribution

const keys = ["user:123", "user:456", "product:789", "session:abc"];

keys.forEach(key => {

console.log(`${key} -> ${hashRing.getServer(key)}`);

});

// Now remove a server and see what happens

console.log("\n--- Removing Server-B ---");

hashRing.removeServer("Server-B");

keys.forEach(key => {

console.log(`${key} -> ${hashRing.getServer(key)}`);

});

The Magic Happens

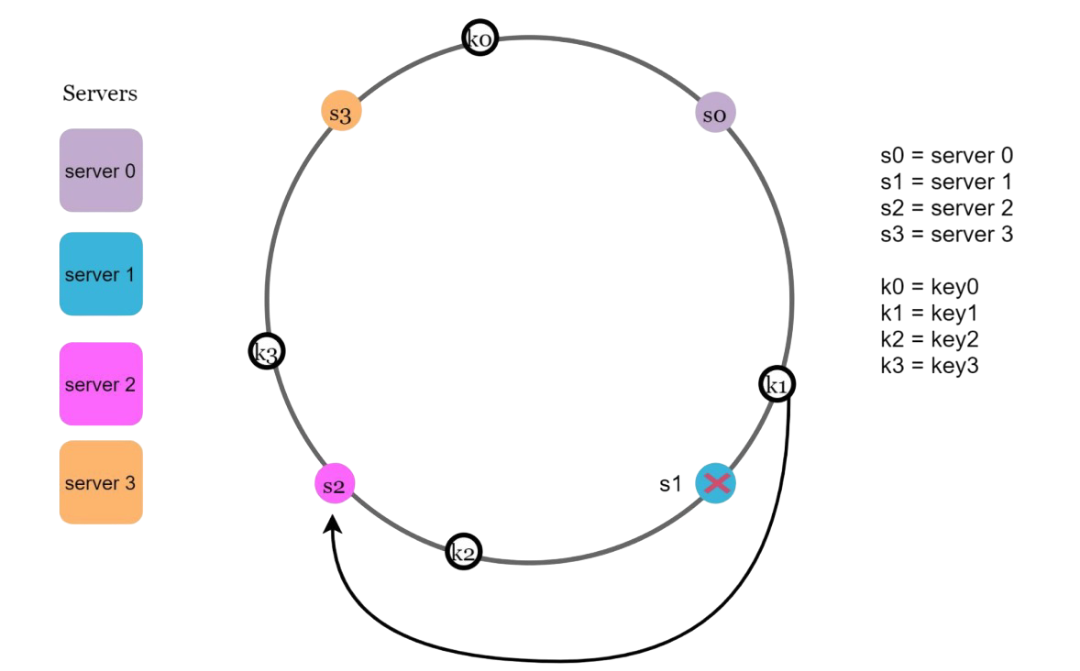

When you run this code, you'll notice something amazing: removing Server-B only affects the keys that were previously assigned to it. All other keys stay with their original servers!

This is the power of consistent hashing - minimal disruption when the cluster topology changes.

The Two Problems with Basic Consistent Hashing

While our basic implementation works, it has two major issues in real-world scenarios:

1. Uneven Partitions

Servers might not be evenly distributed around the ring. One server might handle 50% of the data while another handles only 5%. This leads to hotspots and poor load distribution.

2. Non-uniform Key Distribution

Even if servers are well-distributed, keys might cluster around certain areas of the ring, again causing uneven load.

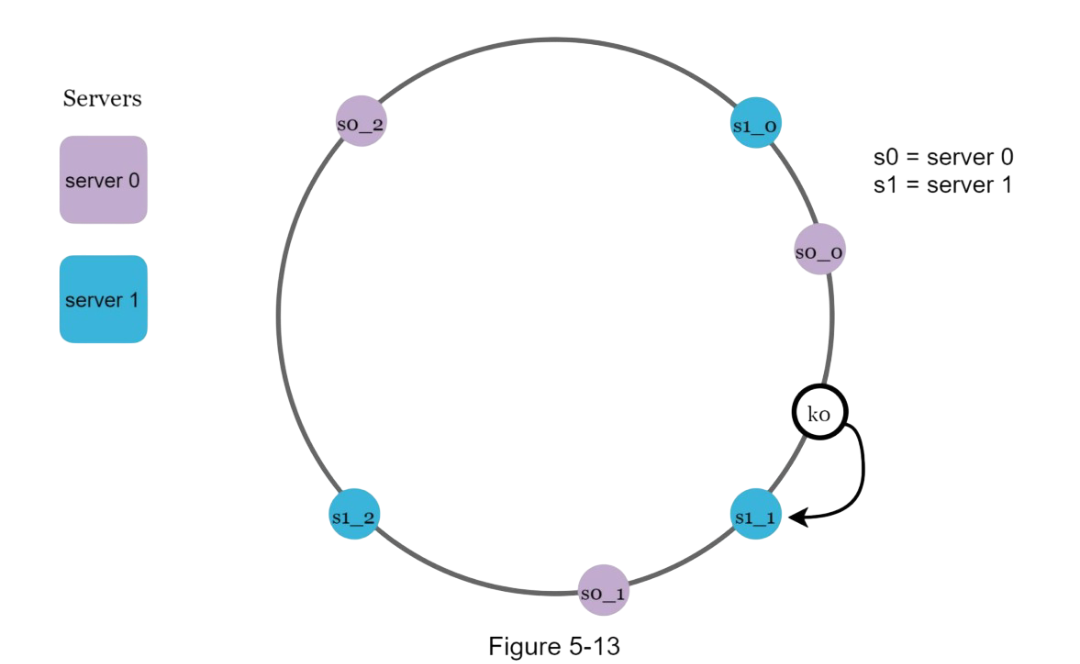

The Solution: Virtual Nodes

Virtual nodes (or replicas) are the secret sauce that makes consistent hashing truly powerful. Instead of placing each server once on the ring, we place multiple "virtual nodes" for each server.

class ConsistentHashWithVirtualNodes {

constructor(virtualNodeCount = 150) {

this.virtualNodeCount = virtualNodeCount;

this.ring = new Map(); // position -> server

this.sortedPositions = [];

}

hash(key) {

let hash = 0;

for (let i = 0; i < key.length; i++) {

const char = key.charCodeAt(i);

hash = ((hash << 5) - hash) + char;

hash = hash & hash;

}

return Math.abs(hash);

}

addServer(server) {

// Add multiple virtual nodes for this server

for (let i = 0; i < this.virtualNodeCount; i++) {

const virtualNodeKey = `${server}:${i}`;

const position = this.hash(virtualNodeKey);

this.ring.set(position, server);

this.sortedPositions.push(position);

}

this.sortedPositions.sort((a, b) => a - b);

console.log(`Added ${server} with ${this.virtualNodeCount} virtual nodes`);

}

removeServer(server) {

// Remove all virtual nodes for this server

const positionsToRemove = [];

for (let [position, serverName] of this.ring.entries()) {

if (serverName === server) {

positionsToRemove.push(position);

}

}

positionsToRemove.forEach(position => {

this.ring.delete(position);

this.sortedPositions = this.sortedPositions.filter(pos => pos !== position);

});

console.log(`Removed ${server} and its ${positionsToRemove.length} virtual nodes`);

}

getServer(key) {

if (this.sortedPositions.length === 0) {

throw new Error("No servers available");

}

const keyPosition = this.hash(key);

// Find the first server position >= key position (clockwise)

for (let position of this.sortedPositions) {

if (position >= keyPosition) {

return this.ring.get(position);

}

}

// Wrap around to the first server

return this.ring.get(this.sortedPositions[0]);

}

// Utility method to analyze distribution

analyzeDistribution(keys) {

const distribution = {};

keys.forEach(key => {

const server = this.getServer(key);

distribution[server] = (distribution[server] || 0) + 1;

});

console.log("Key distribution:", distribution);

return distribution;

}

}

// Test with virtual nodes

const hashRingVirtual = new ConsistentHashWithVirtualNodes(100);

// Add servers

hashRingVirtual.addServer("Server-A");

hashRingVirtual.addServer("Server-B");

hashRingVirtual.addServer("Server-C");

// Generate test keys

const testKeys = [];

for (let i = 0; i < 1000; i++) {

testKeys.push(`key:${i}`);

}

console.log("\n--- Distribution with Virtual Nodes ---");

hashRingVirtual.analyzeDistribution(testKeys);

// Remove a server

console.log("\n--- After removing Server-B ---");

hashRingVirtual.removeServer("Server-B");

hashRingVirtual.analyzeDistribution(testKeys);

Why Virtual Nodes Work

Virtual nodes solve both problems:

- Better distribution: With many virtual nodes per server, the load is much more evenly spread

- Reduced variance: The more virtual nodes you use, the smaller the standard deviation becomes

- Flexibility: You can assign different numbers of virtual nodes to servers based on their capacity

Real-World Implementation Considerations

When implementing consistent hashing in production, here are some key considerations:

1. Hash Function Choice

// Use a cryptographic hash function for better distribution

const crypto = require('crypto');

class ProductionConsistentHash {

hash(key) {

return crypto.createHash('sha1')

.update(key)

.digest('hex')

.substring(0, 8); // Use first 8 hex characters

}

// Convert hex string to integer

hexToInt(hex) {

return parseInt(hex, 16);

}

}2. Handling Server Weights

// Assign virtual nodes based on server capacity

addWeightedServer(server, weight = 1) {

const virtualNodes = this.virtualNodeCount * weight;

for (let i = 0; i < virtualNodes; i++) {

const virtualNodeKey = `${server}:${i}`;

const position = this.hash(virtualNodeKey);

this.ring.set(position, server);

this.sortedPositions.push(position);

}

this.sortedPositions.sort((a, b) => a - b);

console.log(`Added ${server} with weight ${weight} (${virtualNodes} virtual nodes)`);

}3. Replication Strategy

// Get N servers for replication

getServersForReplication(key, replicationFactor = 3) {

const servers = new Set();

const keyPosition = this.hash(key);

let startIndex = this.sortedPositions.findIndex(pos => pos >= keyPosition);

if (startIndex === -1) startIndex = 0;

for (let i = 0; i < this.sortedPositions.length && servers.size < replicationFactor; i++) {

const index = (startIndex + i) % this.sortedPositions.length;

const position = this.sortedPositions[index];

const server = this.ring.get(position);

servers.add(server);

}

return Array.from(servers);

}When to Use Consistent Hashing

Here's my take on when consistent hashing is the right choice:

- Distributed caching: Redis clusters, Memcached clusters

- Database sharding: When you need to distribute data across multiple databases

- Content delivery: Distribute content across CDN nodes

- Load balancing: When you want sticky sessions or stateful connections

- Peer-to-peer systems: BitTorrent, distributed hash tables

When NOT to Use It

- Simple load balancing where any server can handle any request

- Small, static server clusters that rarely change

- When you need guaranteed even distribution (consistent hashing is probabilistically even)

Performance Characteristics

Let's talk numbers:

- Time complexity: O(log N) for server lookup using binary search

- Space complexity: O(N × V) where N is servers and V is virtual nodes per server

- Redistribution: Only K/N keys move when adding/removing servers (where K is total keys)

Tuning Virtual Nodes

The number of virtual nodes is a trade-off:

- More virtual nodes: Better distribution, more memory usage

- Fewer virtual nodes: Less memory, potentially uneven distribution

In practice, 100-200 virtual nodes per server usually provides good balance. Big tech companies often use:

- Amazon DynamoDB: 128-512 virtual nodes per server

- Apache Cassandra: 256 virtual nodes per server (called "tokens")

- Redis Cluster: 16,384 hash slots distributed across nodes

Wrapping Up

Consistent hashing is one of those beautiful algorithms that solves a complex distributed systems problem with elegant simplicity. It's the backbone of many systems you use every day, from your favorite streaming service to cloud storage.

The key insights are:

- Traditional hashing breaks when server count changes

- Hash rings provide stability during topology changes

- Virtual nodes ensure even distribution and flexibility

- It's probabilistically consistent, not perfectly even

Next time you're designing a distributed system and someone asks "how do we handle data distribution when servers come and go?", you'll know exactly what to reach for.

Happy coding! 🚀

- Harsh Chauhan